Prisma 5: Faster by Default

Prisma 5 introduces changes that make it significantly faster. These changes especially improve the experience of using Prisma in serverless environments thanks to a new and more efficient JSON-based wire protocol that Prisma Client uses under the hood.

Improved startup performance in Prisma Client

Since Prisma 4.8.0, we have doubled down on our efforts to improve Prisma's performance and developer experience. In particular, we focused on improving Prisma's startup performance in serverless environments.

In our quest to improve Prisma's performance, we unearthed a few inefficiencies, which we tackled.

To illustrate how much has changed since we began investing in performance, consider the following graphs.

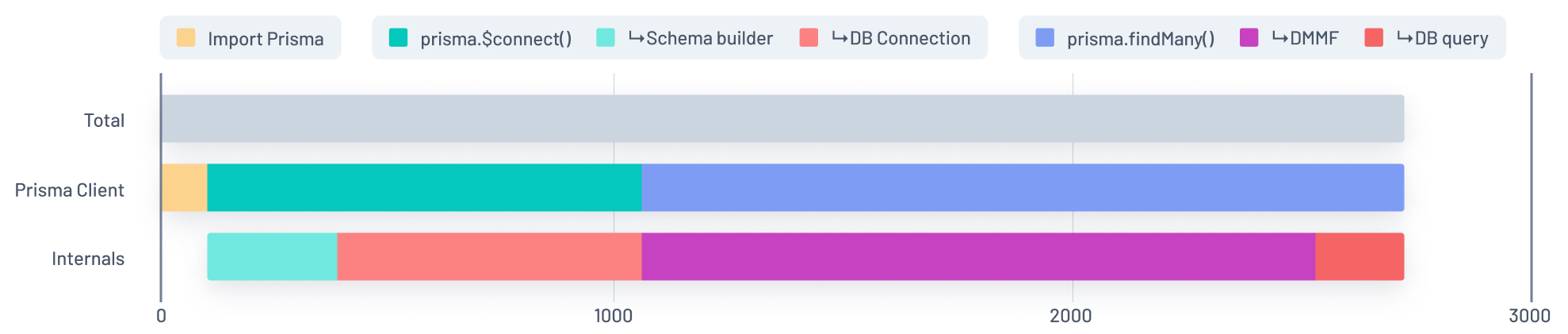

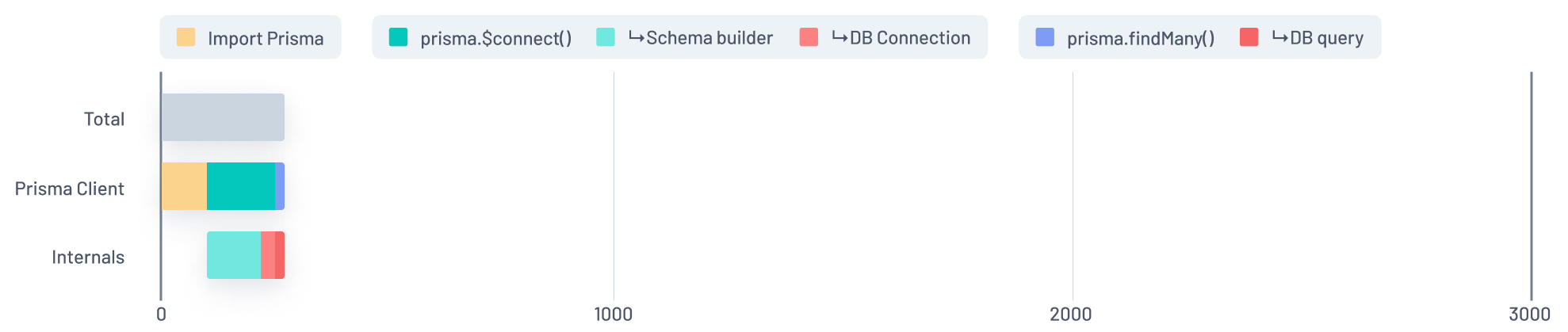

The first graph represents the startup performance of an app deployed to AWS Lambda with a comparatively large Prisma schema (with 500 models) before we began our efforts to improve it:

Before

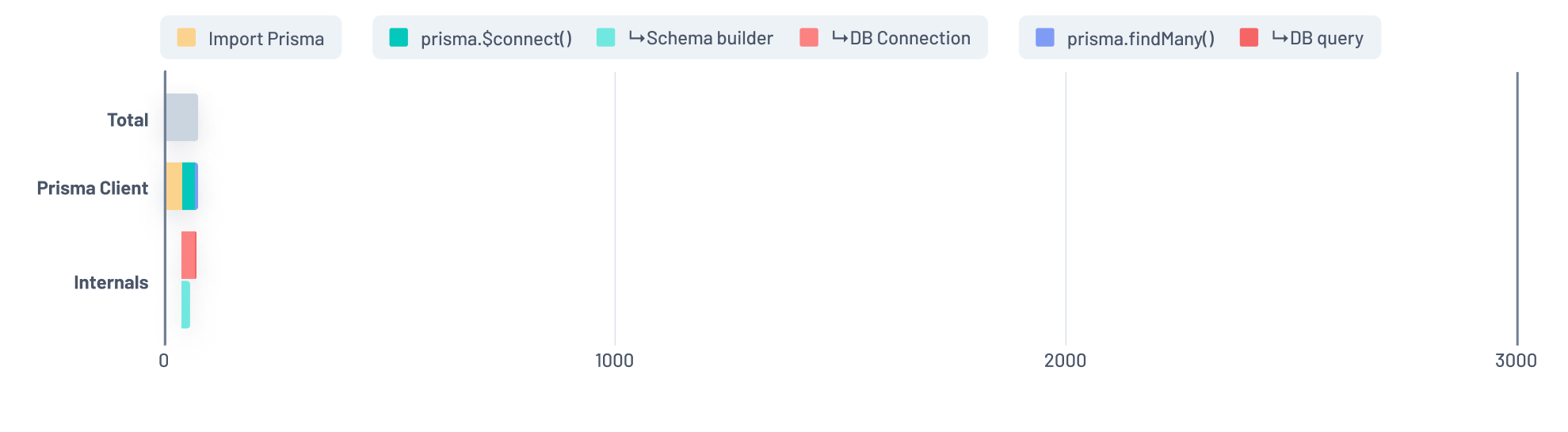

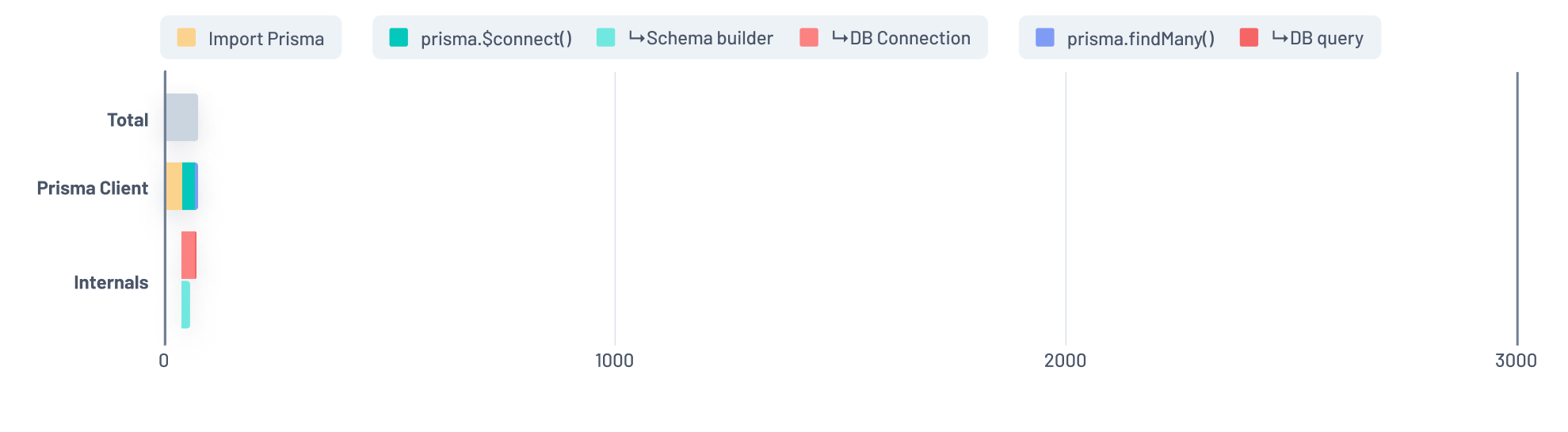

The second graph shows startup performance with Prisma 5, after our performance work:

After

As you can see, there is a significant improvement in Prisma's startup performance. We'll now dig into the changes that got us to this much-improved state.

A more efficient JSON-based wire protocol

Prior to Prisma 4.11.0, Prisma used a GraphQL-like protocol to communicate between Prisma Client and the query engine. This came with a few quirks that impacted Prisma Client's performance, especially on cold starts in serverless environments.

During our performance exploration, we noticed that the GraphQL-like protocol implementation added considerable CPU and memory overhead, especially for larger schemas.

One of our solutions to alleviate this issue was a complete redesign of our wire protocol. Using JSON, we were able to make communication between Prisma Client and the query engine significantly more efficient. We released this feature behind the jsonProtocol Preview feature flag in version 4.11.0.
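To make the contrast between the two styles of protocol more concrete, here is a minimal sketch. The request shape, the field names, and the helper functions `toGraphQLLike` and `toJson` are all hypothetical and invented for illustration. This is not Prisma's actual wire format, and it does not attempt to reproduce where the real overhead came from. It only shows that a string-based query has to be assembled by hand, while a JSON request can go straight through the runtime's built-in serializer.

```typescript
// Hypothetical, simplified request shape. NOT Prisma's actual wire format.
type Selection = { [field: string]: true | Selection };

interface QueryRequest {
  model: string;
  action: string;
  selection: Selection;
}

// GraphQL-like encoding: the client assembles a query string by hand,
// walking the selection tree and concatenating field names.
function renderSelection(selection: Selection): string {
  const fields = Object.entries(selection).map(([name, value]) =>
    value === true ? name : `${name} ${renderSelection(value)}`
  );
  return `{ ${fields.join(" ")} }`;
}

function toGraphQLLike(request: QueryRequest): string {
  const body = renderSelection(request.selection);
  return `query { ${request.action}${request.model} ${body} }`;
}

// JSON encoding: the same request is handed to the native serializer,
// and the receiving side can read it back with a standard JSON parser.
function toJson(request: QueryRequest): string {
  return JSON.stringify(request);
}

const request: QueryRequest = {
  model: "User",
  action: "findMany",
  selection: { id: true, email: true, posts: { title: true } },
};

// "query { findManyUser { id email posts { title } } }"
console.log(toGraphQLLike(request));
console.log(toJson(request));
```

In this sketch the JSON variant needs no custom string building on the sending side and no custom parser on the receiving side, which is one plausible reason a JSON-based protocol can be cheaper to produce and consume.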

Before we began any work on performance improvements, an average "cold start" request looked like this:

Before

After enabling the jsonProtocol Preview feature, the graph looked like this:

After

After a lot of great feedback from our users and extensive testing, we're excited to announce that jsonProtocol is now Generally Available and is the default wire protocol that Prisma Client will use under the hood.

If you're interested in further details, we wrote an extensive blog post that goes in-depth into the changes we made to improve Prisma Client's startup performance: How We Sped Up Serverless Cold Starts with Prisma by 9x.

Smaller JavaScript runtime and optimized internals

Besides changing our protocol, we also made several other changes that improved Prisma's performance:

- With the new JSON-based wire protocol becoming the default, we took the opportunity to clean up Prisma Client's dependencies. This included cutting Prisma Client's dependencies in half and removing the previous GraphQL-like protocol implementation. This reduced the execution time and the amount of memory that Prisma Client used.

- We also optimized the internals of the query engine, specifically the parts responsible for transforming the Prisma schema when the query engine is started and for establishing the database connection. We also now lazily generate the strings for the names of many types in the query schema, which improves the memory usage of Prisma Client and leads to significant runtime performance improvements.

- In addition, connection establishment and Prisma schema transformation now happen in parallel instead of running sequentially as they did before.
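The move from sequential to parallel startup can be sketched in a few lines. This is an illustration under stated assumptions, not the query engine's implementation: `transformSchema` and `connectToDatabase` are hypothetical stand-ins, and the delays are invented values rather than measurements.

```typescript
// Hypothetical stand-ins for two independent startup tasks.
// The delays are invented for illustration and are not measurements.
const sleep = (ms: number): Promise<void> =>
  new Promise((resolve) => setTimeout(resolve, ms));

async function transformSchema(log: string[]): Promise<string> {
  log.push("schema:start");
  await sleep(30);
  log.push("schema:done");
  return "query-schema";
}

async function connectToDatabase(log: string[]): Promise<string> {
  log.push("connect:start");
  await sleep(50);
  log.push("connect:done");
  return "connection";
}

// Sequential startup: the connection is only opened once the schema
// transformation has finished, so the total time is roughly the sum
// of both tasks.
async function startSequentially(log: string[]): Promise<[string, string]> {
  const schema = await transformSchema(log);
  const connection = await connectToDatabase(log);
  return [schema, connection];
}

// Parallel startup: both tasks begin right away, so the total time is
// roughly that of the slower task.
async function startInParallel(log: string[]): Promise<[string, string]> {
  return Promise.all([transformSchema(log), connectToDatabase(log)]);
}
```

Passing an empty array as `log` to each function records the order of events. In the sequential variant the connection starts only after the schema work is done, while in the parallel variant both tasks start before either one finishes. This only pays off when the two tasks do not depend on each other's results.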

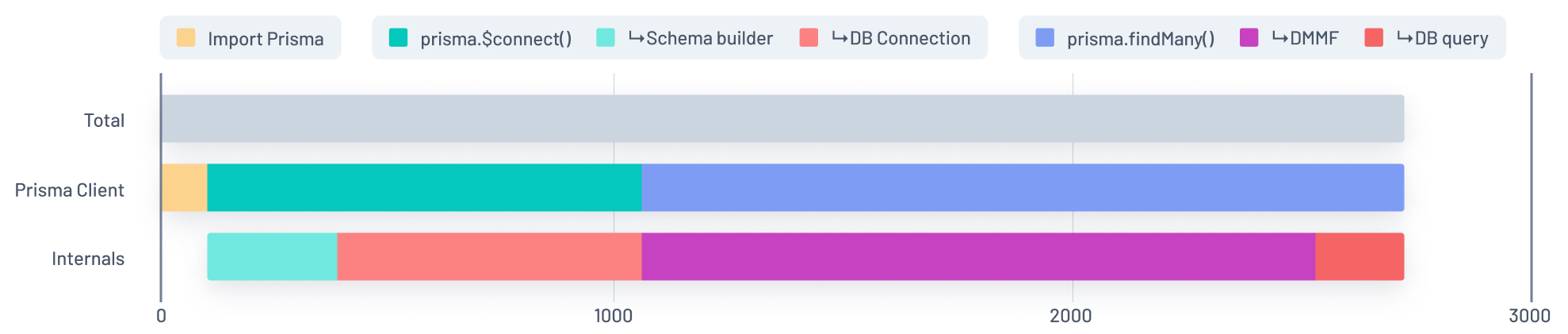

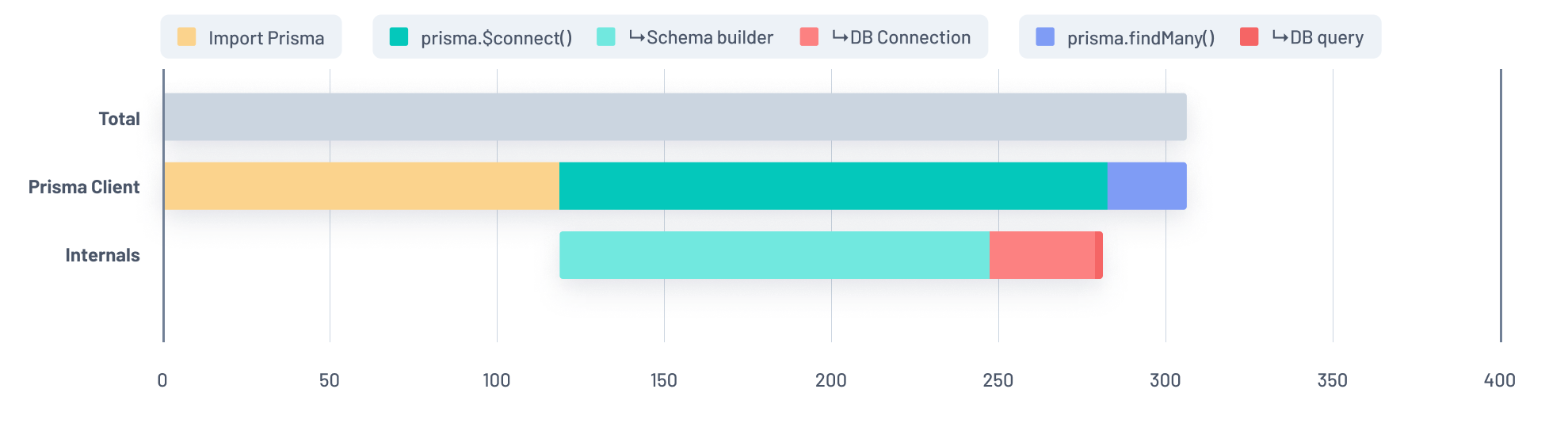

Before we made these three changes, the graph looked like this with the jsonProtocol Preview feature enabled:

Before

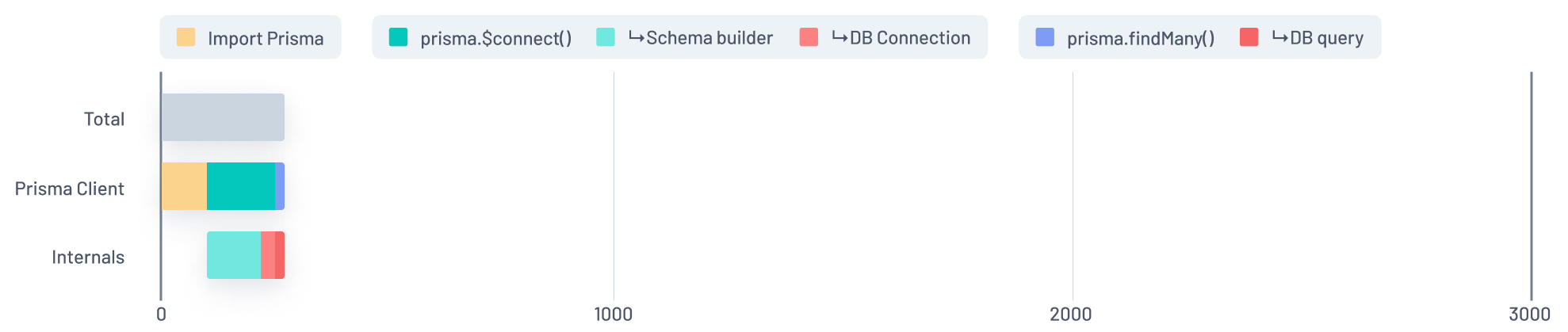

After making these three changes, the response time was cut by two-thirds:

After

The request now leaves a very small footprint.

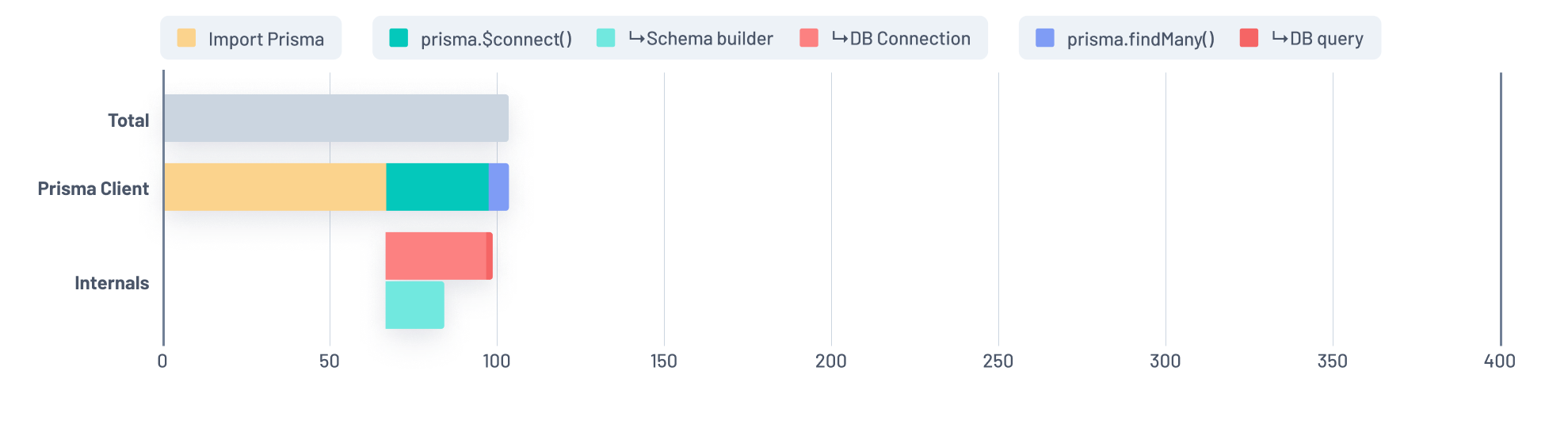

For a zoomed-in comparison of how these changes impact Prisma Client, the first graph shows the impact of the JSON-based wire protocol:

Before: JSON-based wire protocol impact

The following graph shows Prisma Client's performance after we optimized its internals and reduced the size of the JavaScript runtime:

After: Smaller JavaScript runtime and optimized internals impact

Try out Prisma 5 and share your feedback

We encourage you to upgrade to Prisma 5.0.0 and are looking forward to hearing your feedback! 🎉

Prisma 5 is a major version increment, and it comes with a few breaking changes. We expect only a few users will be affected by the changes. However, before upgrading, we recommend that you check out our upgrade guide to understand the impact on your application. If you run into any bugs, please submit an issue, or upvote a corresponding issue if it already exists.

We are committed to improving Prisma's overall performance and will continue shipping improvements that address performance-related issues. Be sure to follow us on X/Twitter so you don't miss any updates!